Uppsala Architecture Research Team

Efficient Techniques for Predicting Cache Sharing and Throughput

This work addresses the modeling of shared cache contention in multicore systems and its impact on throughput and bandwidth. We develop two simple and fast cache sharing models for accurately predicting shared cache allocations for random and LRU caches.

To accomplish this, we use low-overhead input data that captures how applications behave on real hardware as a function of their shared cache allocation. This data lets us determine how much, and how aggressively, an application reuses data depending on how much shared cache it receives. From this we model how applications compete for cache space and predict their aggregate performance (throughput) and bandwidth.
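To make the idea of competing for cache space concrete, here is a minimal sketch, not the authors' implementation. It assumes a random-replacement cache in which, at steady state, each application's share of the cache is proportional to the rate at which it fetches data into the cache. The per-application fetch-rate curves (`app_a`, `app_b`), the 8 MB cache size, and the damped fixed-point solver `solve_sharing` are all hypothetical placeholders standing in for measured input data.

```python
from typing import Callable, List

def solve_sharing(fetch_rate: List[Callable[[float], float]],
                  cache_size: float,
                  damping: float = 0.5,
                  tol: float = 1e-9,
                  max_iters: int = 10_000) -> List[float]:
    """Find allocations where each share is proportional to its fetch rate.

    fetch_rate[i](c) is application i's fetch rate when it holds c units
    of cache (assumed input, e.g. measured per cache allocation).
    """
    n = len(fetch_rate)
    alloc = [cache_size / n] * n  # start from an even split
    for _ in range(max_iters):
        rates = [f(c) for f, c in zip(fetch_rate, alloc)]
        total = sum(rates)
        if total == 0:
            break  # nobody fetches anything; keep the current split
        # Share implied by the current fetch rates.
        target = [cache_size * r / total for r in rates]
        # Damped update; the allocations still sum to cache_size.
        new = [(1 - damping) * a + damping * t for a, t in zip(alloc, target)]
        step = max(abs(x - y) for x, y in zip(new, alloc))
        alloc = new
        if step < tol:
            break
    return alloc

# Hypothetical fetch-rate curves: both drop as the application gets more cache.
def app_a(c: float) -> float:
    return 10.0 / (1.0 + c / 2.0)  # cache-hungry application

def app_b(c: float) -> float:
    return 2.0 / (1.0 + c / 2.0)   # less demanding application

shares = solve_sharing([app_a, app_b], cache_size=8.0)
print([round(s, 3) for s in shares])
```

In this sketch the application with the higher fetch rate ends up holding the larger share of the cache. Once the allocations are known, each application's fetch rate at its allocation can be read off its curve, which is the starting point for estimating bandwidth and throughput.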

We evaluate our models for two- and four-application workloads in simulation and on modern hardware. On a four-core machine, we demonstrate an average relative fetch ratio error of 6.7% for groups of four applications. We are able to predict workload bandwidth with an average relative error of less than 5.2% and throughput with an average error of less than 1.8%. The model predicts the cache size allocated to each application with an average error of 1.3% compared to simulation.

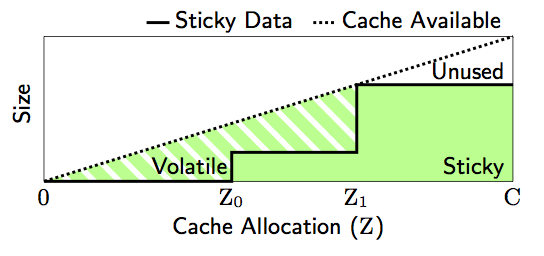

Cache behavior as a function of cache size.

- Efficient techniques for predicting cache sharing and throughput. In Proc. 21st International Conference on Parallel Architectures and Compilation Techniques, pp 305-314, ACM Press, New York, 2012.